You Can Make Whatever Tool You Need

You can make whatever tool you need.

A recent NY Times op-ed that a colleague pointed me to frames the current AI moment as a dangerous reckoning with narcissism, a mindless acceptance of a media and technology environment that will undermine our humanity. The lament is based on McLuhan’s rendering of the idea that our tools shape us as much as, or perhaps more than, we shape our tools. This is not McLuhan’s idea, but he says it famously. Other examples come from Plato (in the Phaedrus dialogue, Socrates argues that writing, that amazing technology, will weaken our memories, and that reading cannot bring about wisdom in the way that conversation, dialectical thinking, thinking with others, can – books can’t talk to you), Jaron Lanier (You Are Not A Gadget; I highly recommend this prescient small book), and many more.

In the same vein, Heidegger spends some time making an interesting distinction that is blurring in the age of AI. He talks about tools as things that are subordinate to human goals. The hammer is ready at hand to be used to hammer on stuff. But the true essence of things is that they are independent and that they are active in the world – they “make” the world. (Heidegger has all sorts of special vocabulary to talk about this, so how I’m paraphrasing it here is not how he wrote or spoke.)

The distinction is that we are either a) living in a world with stuff that we control, that we use to create a world, or b) finding ourselves in the world among these things that are independent of us and have their own active influence. There is a sense that we are losing control, but of course we never had it in the first place.

Anyway, what’s interesting right now is that you can make whatever tool you need if you use Claude Code or something similar. If we agree with McLuhan, Socrates, Lanier and Heidegger, the tools you make will shape you as you use them. But it also means that now you have some control over the tools that shape you, and you can make free decisions about those tools. This is a moment of learning who you are and what you are capable of.

I find this amazing. I have spent the vast majority of my life making things, building social, intellectual, technological, or physical systems. But only recently have I had complete freedom to make ideas tangible in software.

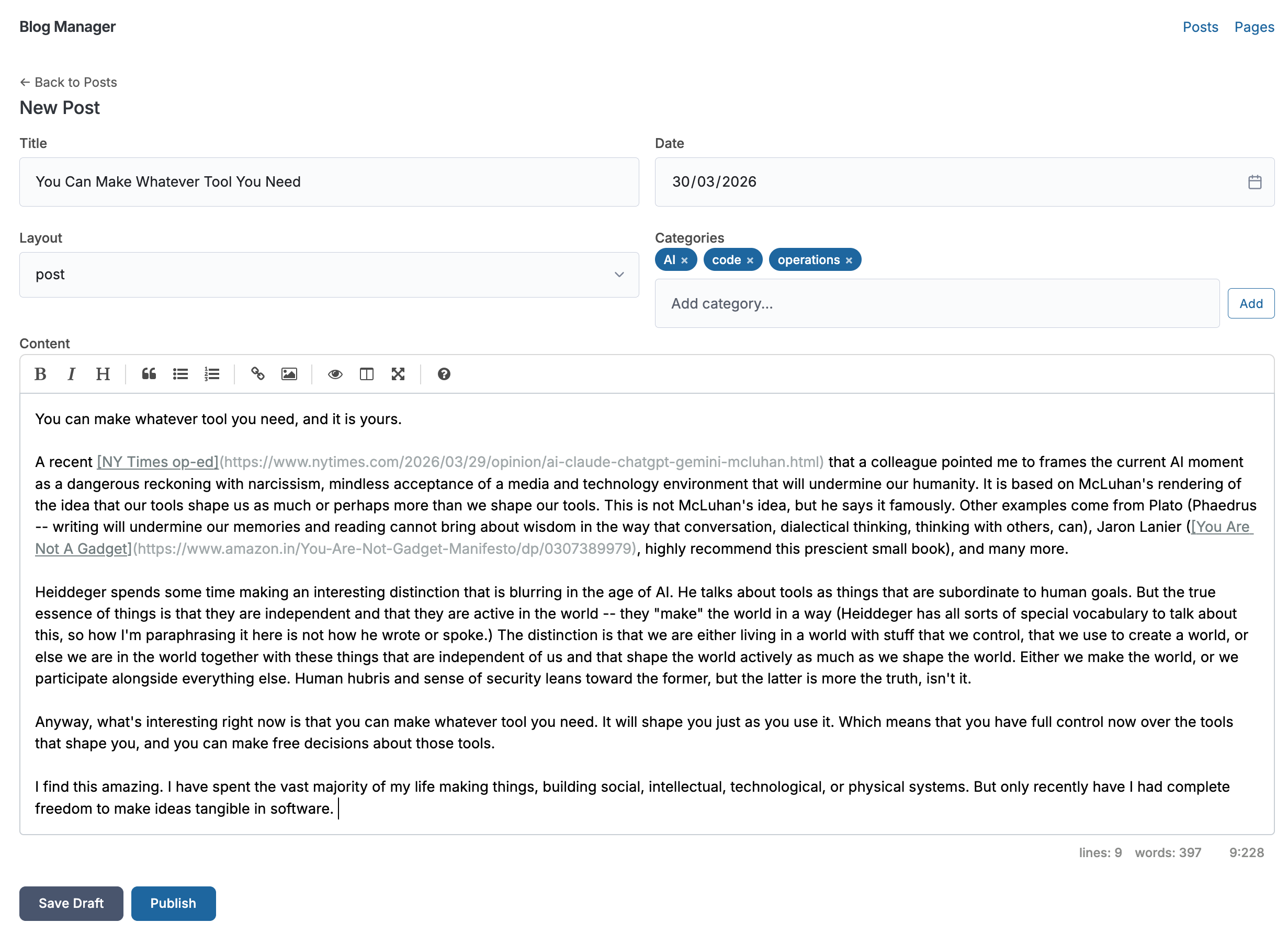

I’m writing this post on a little blog tool that I created last week. It took about 30 minutes to create. I love it. It works just the way I want and need it to. It inspires me to write again. It gets out of the way of my ideas in just the right ways.

Once people understand the tool-creation capabilities at their disposal (Claude Code, OpenAI Codex, Replit, Bolt, etc.), they will create novel experiences for themselves and others that magnify the opportunities to learn, connect, and shape their world. In the past month I have created two iOS apps, a Mac app, and about 20 web apps. Most of these are just learning experiences, not publishable or shareable. (One of them is indeed shareable: it’s an online DnD-style game that my son and I co-created using Claude Code and our own interest in narrative theory and world building. You can play it here: Voidspark)

But as I watch folks in organizations – schools, but also corporations – struggle to accomplish things like data visualization and transparent tracking of processes, or try to master that complex project management tool and give up halfway because it doesn’t do the thing they need it to do… I just think, well, that era is over for me. I build the precise tools I need to accomplish my goals. We can do the same thing together as collaborative groups, organizations, schools, societies.

I have many thoughts about the security, design, and compliance implications of this situation. I am trying to design a system to govern the explosion of creative expression in systems and software that is building momentum. More on that in a future post.

A pragmatic thought. Here is what I found I needed to be successful, and these have held true since the beginning of the AI shift:

- Basic computer science and coding knowledge – I got this via lots of experimentation, as well as Codecademy, Coursera, and other online learning tools.

- Advanced AI tool use – I got really good at getting value from the publicly available AI resources. Again, time on task was key here. Intentional practice, experimentation, failure.

- Good taste – someone told me this is what they call it over in Silicon Valley these days. You can build amazing things, and what you really need more than strong coding skills is good taste. Therefore: invest in skills and experiences to build a sense of good taste. For me, it was travel, reading, consumption of media, and openness to new experiences.

- Good writing skills – or at least, decent writing skills. Tell AI precisely what you want and you will get better results. (But using it as a space for exploration is also illuminating, if you don’t know what you want.) You can use the AI to help you be a more precise writer.